Flying & singing zebra finches - Birdpark#

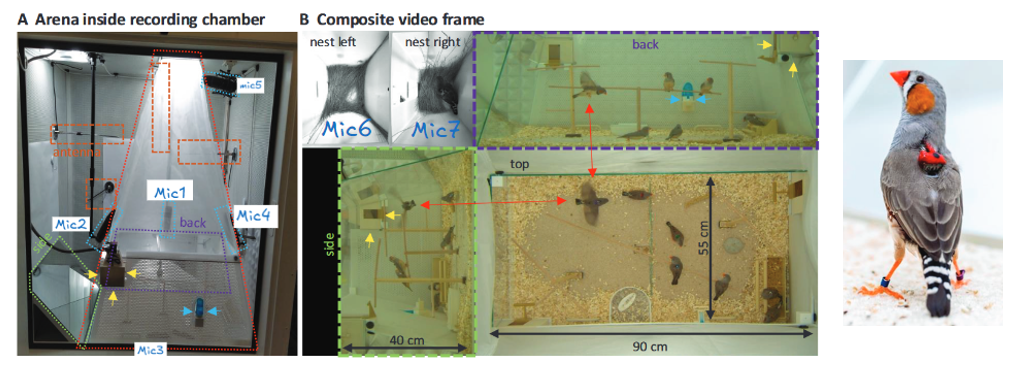

Data from Rüttimann et al. (2024)¹ (paper, zenodo), a multimodal dataset of zebra finch groups with synchronized video, microphone arrays, and backpack-mounted vibration transducer (accelerometers).

The code below shows how one can convert that existing dataset into the Trials.nc format. The sampling rate of the vibration transducer is very high (24kHz), therefore if the bottom plot loads very slowly, you may consider using the Downsample button in I/O.

¹ Rüttimann, L., Wang, Y., Rychen, J., Tomka, T., Hörster, H., & Hahnloser, R. H. R. (2025). Multimodal system for recording individual-level behaviors in songbird groups. PeerJ, 13, e20203. https://doi.org/10.7717/peerj.20203

Left: GUI screenshot, Right: Adapted from Rüttimann et al. (2024)¹ - Fig. 2C

import numpy as np

import xarray as xr

import h5py

import pandas as pd

import requests

import zipfile

from pathlib import Path

from audioio import write_audio

import ethograph as eto

from ethograph.io.nwb_alignment import align_media_per_trial

Explore snippet#

Example data from the birdpark dataset (a 7-second snippet from the copExpBP08 recording) is available in:

data/examples/copExpBP08_trim.mp4- Video filedata/examples/copExpBP08_trim.wav- Audio filedata/examples/copExpBP08_trim.nc- Accelerometer data and metadata

You can open these files directly from the GUI to explore the dataset.

Download dataset#

You can download the entire dataset from here or use the code below. I only tested the copExpBP08 recording. If problems arise, the ReadMe here is very helpful.

try:

_here = Path(__vsc_ipynb_file__).parent

except NameError:

_here = Path().resolve()

data_folder = _here.parent / "data" / "birdpark"

data_folder.mkdir(parents=True, exist_ok=True)

response = requests.get("https://zenodo.org/api/records/13144875")

data = response.json()

for file in data["files"]:

if file["checksum"] == "md5:32d1ae6049556c803f68b6d354c952ca":

print(f"Checksum matches: {file['key']}")

output_path = data_folder / file["key"]

r = requests.get(file["links"]["self"], stream=True)

with open(output_path, "wb") as f:

for chunk in r.iter_content(chunk_size=8192):

f.write(chunk)

if output_path.suffix == ".zip":

with zipfile.ZipFile(output_path, "r") as zip_ref:

zip_ref.extractall(data_folder)

break

Build NWB alignment and dataset#

# Recording: copExpBP08, session BP_2021-05-25_08-12-51_655154_0380000

fps = 47.6837158203125

audio_sr = 24414.0625 # audio and accelerometer sampling rate (from dataset metadata)

h5_path = data_folder / "copExpBP08" / "BP_2021-05-25_08-12-51_655154_0380000.h5"

video_path = data_folder / "copExpBP08" / "BP_2021-05-25_08-12-51_655154_0380000.mp4"

audio_path = video_path.with_suffix(".wav")

nc_path = video_path.with_suffix(".nc")

# Read H5 file

with h5py.File(h5_path, "r") as f1:

radioSignals = f1["/radioSignals"][()] # accelerometer (one row per channel)

daqSignals = f1["/daqSignals"][()] # microphone channels

# Create .wav file from microphone channels

write_audio(audio_path, daqSignals.T, audio_sr)

# ─── Build session table ───

# trial=1 matches the ID assigned when loading a plain Dataset as a single-trial TrialTree

session_table = pd.DataFrame({

"trial": [1],

"video_0": [str(video_path)],

"audio_0": [str(audio_path)],

})

nwb_path = data_folder / "copExpBP08" / ".ethograph" / "alignment.nwb"

align_media_per_trial(

trial_table=session_table,

stream_rates={"video": float(fps), "audio": float(audio_sr)},

output_path=nwb_path,

)

# ─── Build xarray dataset ───

time_coords = np.arange(radioSignals.shape[1]) / audio_sr

ds = xr.Dataset(

data_vars={

"vibration": xr.DataArray(

radioSignals.T,

dims=["time", "individuals"],

),

},

coords={

"time": time_coords,

"individuals": ["male (red radio)", "female (yellow radio)"], # specific to copExpBP08

},

attrs={

"fps": fps,

"audio_sr": audio_sr,

},

)

ds.to_netcdf(nc_path)

print(f"Saved to {nc_path}")

ds # Inspect

Decent for segmentation#

voc.segment.meansquared(

<data>, # set by GUI

<sr>, # set by GUI

threshold=15000,

min_dur=0.003,

min_silent_dur=0.0001,

freq_cutoffs=(500, 10000),

smooth_win=0.32,

scale=True,

scale_val=32768,

)